Containerize VMware PowerCLI and Google Cloud Tools for PowerShell

One benefit of running applications inside containers is that it helps eliminate problems caused by underlying dependencies. For instance, the PowerCLI automation scripts that system administrators run may depend on specific operating system, PowerShell, and PowerCLI versions, not to mention any modules and code they require. Likewise, a Google Cloud administrator who uses Google Cloud Tools for PowerShell also depends on the gcloud CLI, which handles authentication.

Wouldn’t it be great if you could build a Docker image with all of those elements, so that a known, tested combination could be put into reliable service for your infrastructure operations? There are several ways to go about this, and I tried a few of them. In this article I’ll share the details of a straightforward approach that satisfies these dependencies, so you can build your own custom image with the components you need.

Building the Container

To satisfy the gcloud CLI dependency, simply start from the official Google Cloud CLI Docker image. It is available in several Debian-based variants that differ in which additional components and utilities they include, and the Debian base is what lets the next step use standard APT tooling.

Next, add Microsoft’s official package repository to the APT sources so that you can install the latest PowerShell release directly with apt-get.

After that, use Install-Module to add PowerCLI (the VMware.PowerCLI module) and Google Cloud Tools for PowerShell (the GoogleCloud module) from the PowerShell Gallery. From there, you can add your own code with the Dockerfile COPY instruction or fetch it from an online source.

Check out this example Dockerfile that shows how it all fits together.
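The steps above might fit together roughly as follows. This is a sketch under stated assumptions, not necessarily the example file mentioned above: the script writes a Dockerfile that assumes the Debian-based `:latest` gcloud image, an x64 build host, and a hypothetical `scripts/` directory holding your PowerShell code.

```shell
#!/usr/bin/env bash
# Sketch: write a Dockerfile that layers PowerShell, PowerCLI, and
# Google Cloud Tools for PowerShell onto the official gcloud CLI image.
# Assumptions: Debian-based :latest image, x64 build host, and a
# hypothetical ./scripts directory containing your PowerShell code.
set -euo pipefail

cat > Dockerfile <<'EOF'
# Official Google Cloud CLI image (Debian-based); pin a version tag in practice
FROM gcr.io/google.com/cloudsdktool/google-cloud-cli:latest

# Register Microsoft's APT repository via packages-microsoft-prod.deb,
# then install PowerShell with apt-get
RUN apt-get update \
 && apt-get install -y --no-install-recommends curl ca-certificates \
 && . /etc/os-release \
 && curl -fsSL -o /tmp/packages-microsoft-prod.deb \
      "https://packages.microsoft.com/config/debian/${VERSION_ID}/packages-microsoft-prod.deb" \
 && dpkg -i /tmp/packages-microsoft-prod.deb \
 && rm /tmp/packages-microsoft-prod.deb \
 && apt-get update \
 && apt-get install -y powershell \
 && rm -rf /var/lib/apt/lists/*

# Install PowerCLI and Google Cloud Tools for PowerShell from the PowerShell Gallery
RUN pwsh -NoLogo -NoProfile -Command \
    "Set-PSRepository -Name PSGallery -InstallationPolicy Trusted; \
     Install-Module -Name VMware.PowerCLI -Scope AllUsers; \
     Install-Module -Name GoogleCloud -Scope AllUsers"

# Add your own automation code (hypothetical ./scripts directory).
# Do not COPY the service account key into the image.
WORKDIR /app
COPY scripts/ /app/

CMD ["pwsh"]
EOF
```

Running the script leaves a `Dockerfile` in the current directory, which you could build with something like `docker build -t gcloudpowerdemo .` (Docker repository names must be lowercase). Pinning the base image to a specific version tag instead of `:latest` helps keep the tested combination reproducible.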

Running the Container

Once the container image has been built, and typically pushed to an internal container registry, you are almost ready to run it. To authenticate to Google Cloud, create a service account with the IAM roles your use case requires, which may cover Google Cloud VMware Engine (GCVE), Compute Engine (GCE), Cloud Storage, or other Google Cloud services. Then create a JSON key for that service account, which the container will use for authentication.
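One way to create the service account and its key is with the gcloud CLI on an administrator workstation. In this sketch, `PROJECT_ID`, `SA_NAME`, and `ROLE` are hypothetical placeholders, and `roles/compute.viewer` merely stands in for whichever narrowly scoped roles your automation actually needs. The script only defines functions, so source it and call `create_sa_and_key` from an authenticated gcloud session.

```shell
#!/usr/bin/env bash
# Sketch: create a dedicated service account, grant it one IAM role,
# and download a JSON key for the container.
# Hypothetical placeholders: PROJECT_ID, SA_NAME, ROLE (override via env).
set -euo pipefail

PROJECT_ID="${PROJECT_ID:-my-project}"
SA_NAME="${SA_NAME:-gcloud-powershell}"
ROLE="${ROLE:-roles/compute.viewer}"   # placeholder; grant least privilege

# Service account emails follow NAME@PROJECT_ID.iam.gserviceaccount.com
sa_email() {
  printf '%s@%s.iam.gserviceaccount.com\n' "$1" "$2"
}

create_sa_and_key() {
  local email
  email="$(sa_email "$SA_NAME" "$PROJECT_ID")"

  gcloud iam service-accounts create "$SA_NAME" \
    --project="$PROJECT_ID" \
    --display-name="Containerized PowerShell automation"

  gcloud projects add-iam-policy-binding "$PROJECT_ID" \
    --member="serviceAccount:${email}" \
    --role="$ROLE"

  # Writes creds.json to the current directory; run this outside any
  # Docker build context (or exclude the file with .dockerignore)
  gcloud iam service-accounts keys create creds.json \
    --iam-account="$email"
}
```

Repeat the `add-iam-policy-binding` call once per role if your use case spans several services.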

Protect the key file, and do not be tempted to copy it into the container image! Instead, keep it on the container host and mount it into the container as a Docker volume, or use another secrets management approach supported by your container orchestration platform.
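A minimal sketch of host-side key hygiene, assuming GNU coreutils (`stat -c`) on the container host and the `creds.json` file name used in the docker run example. It adds the key to `.dockerignore` so it never enters a build context, tightens the file permissions, and defines a hypothetical `key_is_private` check you could call before starting the container.

```shell
#!/usr/bin/env bash
# Sketch: keep the service account key out of images and readable only
# by its owner. Assumes GNU stat and a key named creds.json by default.
set -euo pipefail

KEY_FILE="${KEY_FILE:-creds.json}"

# Add a pattern to .dockerignore once, so `docker build .` never sends it
add_dockerignore_entry() {
  touch .dockerignore
  grep -qxF "$1" .dockerignore || printf '%s\n' "$1" >> .dockerignore
}

# Succeed only if the file exists and has no group/other permission bits
key_is_private() {
  local mode
  [ -f "$1" ] || return 1
  mode="$(stat -c '%a' "$1")"
  [ $(( 8#$mode & 8#077 )) -eq 0 ]
}

add_dockerignore_entry "$KEY_FILE"
if [ -f "$KEY_FILE" ]; then
  chmod 600 "$KEY_FILE"   # owner read/write only
fi
```

Mounting the file with the `:ro` suffix on the `-v` option additionally keeps processes in the container from modifying it.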

As is typical with containerized applications, you can set several environment variables to tell the gcloud CLI where to find the key file and which Google Cloud project, zone, and region to use. You can set them in an .env file, on the docker command line, or in another manifest file if you are using a more advanced container orchestration platform.

These are the variables relevant to this example, although other use cases may need additional ones:

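- `CLOUDSDK_AUTH_CREDENTIAL_FILE_OVERRIDE`: path to the service account key file inside the container
- `CLOUDSDK_CORE_PROJECT`: default Google Cloud project
- `CLOUDSDK_COMPUTE_ZONE`: default Compute Engine zone
- `CLOUDSDK_COMPUTE_REGION`: default Compute Engine region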

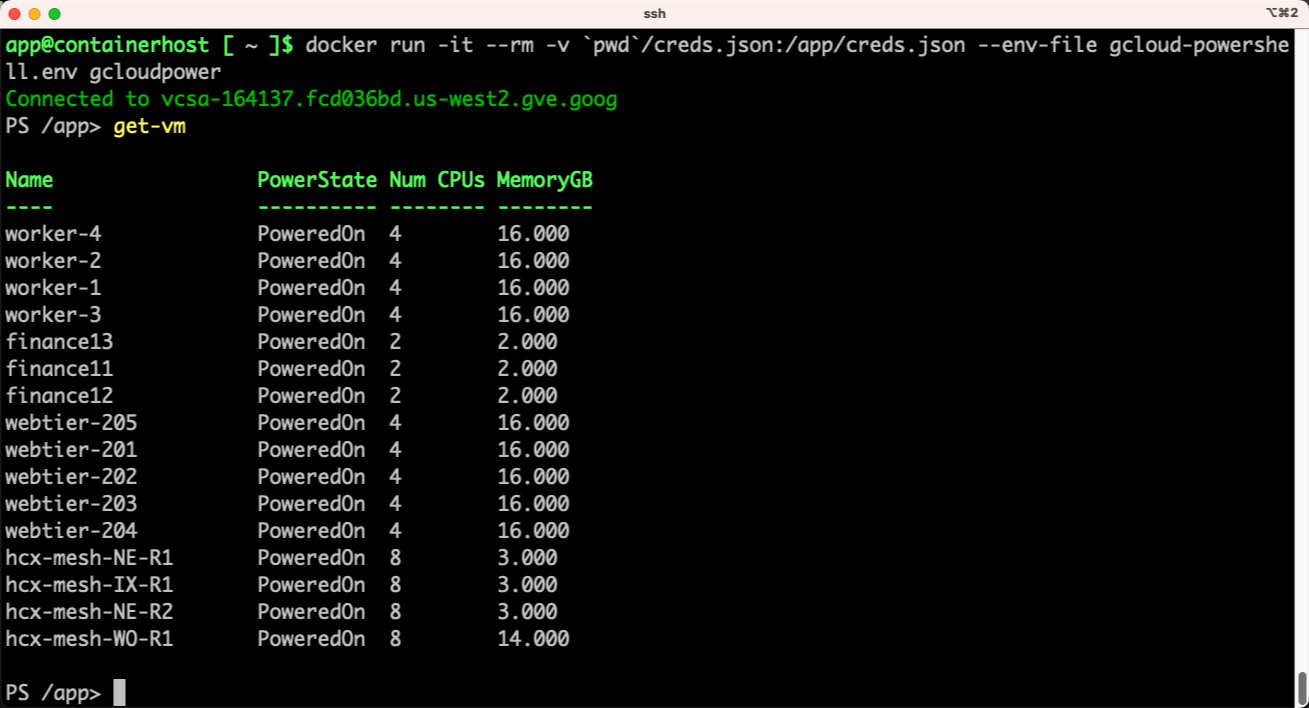
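A sketch of the env file, written from a shell script. The `gcloud-powershell.env` name and the `/app/creds.json` key path match the docker run example; the project, zone, and region values are hypothetical placeholders.

```shell
#!/usr/bin/env bash
# Sketch: write the env file consumed by `docker run --env-file`.
# my-project, us-central1-a, and us-central1 are hypothetical placeholders.
set -euo pipefail

ENV_FILE="${ENV_FILE:-gcloud-powershell.env}"

# Docker env files are plain VAR=value lines and quotes are NOT stripped,
# so values are written without quotation marks.
cat > "$ENV_FILE" <<'EOF'
CLOUDSDK_AUTH_CREDENTIAL_FILE_OVERRIDE=/app/creds.json
CLOUDSDK_CORE_PROJECT=my-project
CLOUDSDK_COMPUTE_ZONE=us-central1-a
CLOUDSDK_COMPUTE_REGION=us-central1
EOF
```

Because Docker's `--env-file` reads each line literally, quotation marks around a value would become part of the value.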

Set those in an appropriate configuration file and start the container. With plain Docker, the command looks like this:

```shell
docker run -it --rm \
  -v "$(pwd)/creds.json:/app/creds.json:ro" \
  --env-file gcloud-powershell.env \
  gcloudpowerdemo
```
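For scheduled or CI-driven automation you might skip the interactive session and have `pwsh` run a script directly. This sketch assumes the image was tagged `gcloudpowerdemo` (Docker repository names must be lowercase) and that the script path you pass, for example `/app/report.ps1`, is a hypothetical file baked into the image. It mounts the key read-only from an absolute host path.

```shell
#!/usr/bin/env bash
# Sketch: non-interactive run of a PowerShell script inside the image.
# Assumptions: image tag gcloudpowerdemo; script path passed as $1 is hypothetical.
set -euo pipefail

IMAGE="${IMAGE:-gcloudpowerdemo}"
KEY_FILE="${KEY_FILE:-$PWD/creds.json}"      # bind mounts need an absolute path
ENV_FILE="${ENV_FILE:-gcloud-powershell.env}"

# Assemble docker run arguments in the global RUN_ARGS array:
# read-only key mount, env file, then pwsh running the given script.
build_run_args() {
  RUN_ARGS=(run --rm
    -v "${KEY_FILE}:/app/creds.json:ro"
    --env-file "$ENV_FILE"
    "$IMAGE"
    pwsh -NoLogo -NoProfile -File "$1")
}

run_script() {
  build_run_args "$1"
  docker "${RUN_ARGS[@]}"
}
```

Source the script and call, for example, `run_script /app/report.ps1` from cron or a pipeline job; dropping `-it` avoids errors when no terminal is attached.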

Takeaway

If you want to encapsulate your Google Cloud VMware Engine management and automation tools in a container image, one easy approach is to start with the official Google Cloud CLI image, add the latest PowerShell, and install the modules you need for your environment. Authenticate with a service account, follow the principle of least privilege when assigning its IAM roles, and protect the key file from unauthorized access.