TKG Service Routing Options on VMware Cloud on AWS

Service Routing Options for TKG on VMware Cloud on AWS

Once you’ve deployed a Kubernetes cluster, a common next step is to set up network connectivity to it so you can access your applications. If you’ve deployed a Tanzu Kubernetes Grid (TKG) cluster on VMware Cloud on AWS, you have several options for service routing. This post walks through the options available on VMware Cloud on AWS.

The Control Plane

Before we dig into the particulars of service routing, it’s important to note that we’re not focusing on accessing the control plane nodes. Each TKG cluster can be deployed as a development cluster or a production cluster. The primary difference between the two is the number of control plane nodes: a development cluster has a single control plane node, while a production cluster has three for redundancy. Regardless of which service routing mechanism you use, the control plane nodes are load-balanced by kube-vip. A kube-vip container runs on each control plane node, and the virtual IP (VIP) it advertises is the cluster endpoint. Your KUBECONFIG is configured with this endpoint to access the cluster. You can see the kube-vip containers running by listing the pods in the kube-system namespace.
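The quickest way to list those pods is `kubectl get pods -n kube-system`. If you want to pick out the kube-vip pods programmatically, a minimal sketch could filter the JSON form of that listing (`kubectl get pods -n kube-system -o json`). The sample data and pod names below are hypothetical stand-ins, and the sketch assumes the pod names start with `kube-vip`.

```python
def kube_vip_pods(pod_list):
    """Return the names of pods whose name starts with 'kube-vip'."""
    return [
        item["metadata"]["name"]
        for item in pod_list.get("items", [])
        if item["metadata"]["name"].startswith("kube-vip")
    ]


# Hypothetical sample shaped like `kubectl get pods -n kube-system -o json`.
# Real pod names depend on your cluster and node names.
SAMPLE = {
    "kind": "List",
    "items": [
        {"metadata": {"name": "coredns-example-1", "namespace": "kube-system"}},
        {"metadata": {"name": "kube-vip-tkg-dev-control-plane-abcde", "namespace": "kube-system"}},
        {"metadata": {"name": "kube-proxy-example-2", "namespace": "kube-system"}},
    ],
}

for name in kube_vip_pods(SAMPLE):
    print(name)
```

On a production cluster you would expect one such pod per control plane node.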

You can also identify which control plane node owns the VIP by looking at the vSphere UI. One of the control plane nodes will have the cluster endpoint listed.

HostPort

The first option we’ll discuss is probably the least likely to be used. Sometimes a deployed pod needs to be reachable on a specific port of the host it runs on. At one point, some ingress controllers used a hostPort to listen on ports 80 and 443. HostPorts are possible on VMware Cloud on AWS but should be avoided if possible, because a pod bound to a hostPort can only be scheduled on a node where that port is still free. See the configuration best practices on the kubernetes.io site.
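To check whether a workload relies on a hostPort, one option is to scan its manifest for the `hostPort` field under each container's `ports` list. The following is a rough sketch over a manifest loaded as a Python dict. The pod name, image, and port numbers are made up for illustration.

```python
def host_ports(pod):
    """Return (container name, hostPort) pairs declared in a Pod manifest."""
    found = []
    for container in pod.get("spec", {}).get("containers", []):
        for port in container.get("ports", []):
            if "hostPort" in port:
                found.append((container["name"], port["hostPort"]))
    return found


# Hypothetical Pod manifest that binds container port 80 to port 8080 on the host.
POD = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "nginx",
                "ports": [{"containerPort": 80, "hostPort": 8080}],
            }
        ]
    },
}

print(host_ports(POD))
```

An empty result means the pod does not claim any port on its host.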

NodePort

If you configure a Kubernetes service of type NodePort, Kubernetes allocates a port from the 30000-32767 range by default. A benefit of NodePorts, specifically over HostPorts, is that any node can receive the network traffic and route it to the correct container, whether or not that container runs on the receiving node. The container networking solution (either Antrea or Calico for TKG clusters) carries the traffic to the node where the container resides.
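As an illustration, here is a small sketch that builds a NodePort Service manifest as a Python dict and rejects a requested port outside the default range. The service name, the `app` selector label, and the port numbers are assumptions for the example. The output is JSON, which `kubectl apply -f` accepts as well as YAML.

```python
import json

# Default NodePort range (30000-32767 inclusive). Clusters can change it
# with the kube-apiserver --service-node-port-range flag.
NODE_PORT_RANGE = range(30000, 32768)


def nodeport_service(name, port, target_port, node_port=None):
    """Build a Service manifest of type NodePort.

    If node_port is None, Kubernetes picks a free port from the range.
    """
    if node_port is not None and node_port not in NODE_PORT_RANGE:
        raise ValueError(f"nodePort {node_port} is outside 30000-32767")
    port_spec = {"port": port, "targetPort": target_port, "protocol": "TCP"}
    if node_port is not None:
        port_spec["nodePort"] = node_port
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "NodePort",
            "selector": {"app": name},  # hypothetical label on the pods
            "ports": [port_spec],
        },
    }


print(json.dumps(nodeport_service("web", 80, 8080, node_port=30080), indent=2))
```

With this manifest applied, the app would be reachable on port 30080 of any node in the cluster.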

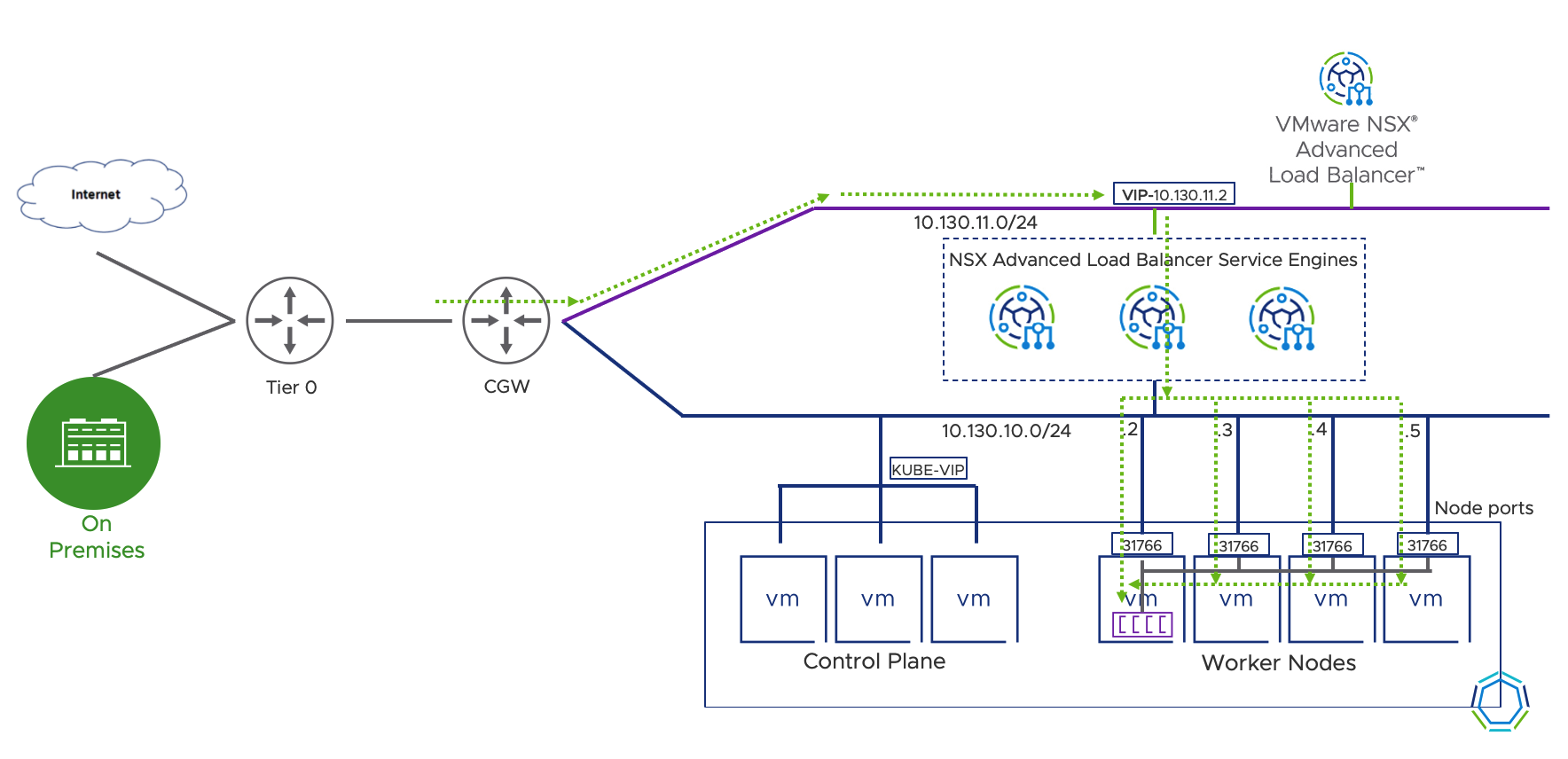

NSX Advanced Load Balancer

While NodePorts are preferred over HostPorts, they still have drawbacks. For instance, you can now connect to your app through any node in the cluster, but what happens when the cluster’s nodes are upgraded, deleted, or scaled? As TKG upgrades the cluster or scales nodes, the VMs that make up the cluster might change, including their IP addresses.

A good solution for this is VMware’s NSX Advanced Load Balancer. It updates its node pools as the cluster’s nodes change and provides a single virtual IP address (VIP) that you can point a DNS entry at. Platform teams can apply a service of type LoadBalancer to the Kubernetes cluster, and the NSX Advanced Load Balancer controller provisions a layer 4 load balancer through the service engines. The service engines can also scale up as traffic increases so that you won’t overload the load balancer.
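From the Kubernetes side, the only change from a NodePort service is the service type. Once a load balancer has been provisioned, the assigned address appears under `status.loadBalancer.ingress` on the Service object. The sketch below builds such a manifest and reads the VIP back from a Service dict. The names, the `app` label, and the 192.0.2.10 address are placeholders, not values from a real environment.

```python
def loadbalancer_service(name, port, target_port):
    """Build a Service manifest of type LoadBalancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            "selector": {"app": name},  # hypothetical label on the pods
            "ports": [{"port": port, "targetPort": target_port, "protocol": "TCP"}],
        },
    }


def assigned_vip(service):
    """Return the first load balancer IP from a Service's status, or None."""
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress", [])
    return ingress[0].get("ip") if ingress else None


svc = loadbalancer_service("web", 80, 8080)
print(assigned_vip(svc))  # nothing assigned yet for a freshly built manifest

# Hypothetical status, as it might look after a VIP has been allocated.
svc["status"] = {"loadBalancer": {"ingress": [{"ip": "192.0.2.10"}]}}
print(assigned_vip(svc))
```

The returned address is the one you would use for the DNS entry.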

NOTE: NSX Advanced Load Balancer can also do Layer 7 load balancing with an Enterprise license.

NSX Advanced Load Balancer with Ingress

If you’re looking for a layer 7 ingress controller, you can continue using NSX Advanced Load Balancer to route traffic to your cluster nodes, just as explained in the previous section. After completing that setup, you can deploy an ingress controller such as Contour into your cluster and publish it with a service of type LoadBalancer. This setup gets traffic from the physical/virtual network to the Kubernetes nodes running your ingress controller. From there, you can apply HTTPProxy or Ingress manifests to configure the layer 7 rules on your ingress controller.
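For a sense of what those layer 7 rules look like, here is a sketch that builds a standard `networking.k8s.io/v1` Ingress manifest routing URL path prefixes on one hostname to backend services. The hostname, paths, and service names are invented for the example, and your ingress controller may need extra fields (such as an ingress class) that are left out here.

```python
import json


def ingress(name, host, routes):
    """Build an Ingress manifest.

    routes maps a URL path prefix to a (service name, service port) pair.
    """
    paths = [
        {
            "path": path,
            "pathType": "Prefix",
            "backend": {"service": {"name": svc, "port": {"number": port}}},
        }
        for path, (svc, port) in routes.items()
    ]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {"rules": [{"host": host, "http": {"paths": paths}}]},
    }


# Hypothetical routing: "/" goes to the web service, "/api" to an API service.
manifest = ingress("web", "app.example.com", {"/": ("web", 80), "/api": ("api", 8080)})
print(json.dumps(manifest, indent=2))
```

The ingress controller reads these rules and forwards each request to the matching service inside the cluster.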

In my environment, I’ve deployed the Contour ingress controller as well as a web service and published them both with services of type LoadBalancer. You can deploy your preferred ingress controller or skip the ingress controller and use the NSX Advanced Load Balancer directly (either layer 4, or layer 7 if you have an Enterprise license), depending on your use case.

Summary

There are plenty of options to route traffic to your Kubernetes clusters on VMware Cloud on AWS. NodePorts can be accessed directly, or load balanced with a tool such as the NSX Advanced Load Balancer. You can also use ingress controllers if you’re looking for layer 7 load balancing rules. HostPorts are possible but not recommended.

Additional Resources

Configuring NSX Advanced Load Balancer with TKG on VMware Cloud on AWS

Tanzu Kubernetes Grid Deployment on VMware Cloud on AWS

Changelog

The following updates were made to this guide.

| Date | Description of Changes |
| --- | --- |
| 2021-07-15 | Initial publication |

About the Author and Contributors

Eric Shanks has spent two decades working with VMware and cloud technologies focusing on hybrid cloud and automation. Eric has obtained some of the industry’s highest distinctions, including two VMware Certified Design Expert (VCDX #195) certifications and many others across various solutions, including Microsoft, Cisco, and Amazon Web Services.

Eric has been an active community contributor as a Chicago VMUG leader, blogger at theITHollow.com, and Tech Field Day delegate.

- Eric Shanks, Sr. Technical Marketing Architect, Cloud Services Business Unit, VMware